#general

2026-05-01

RS

00:02:51

+1! A few of my friends and relatives have run into similar situations before. This really is an important issue.

Otis Wu

01:18:31

@otisemailsave has joined the channel

shinyee

05:52:32

@ob4106702 has joined the channel

Yragem

06:56:32

Thank you, but there is only so much I can do alone. I hope we can bring together more people's energy and expertise!

bestian

07:51:00

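@uoly2009 Hello, I think your project is very meaningful!

So I built a LINE bot backend template. You, or any engineers your team finds, are welcome to use it for further development.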

https://github.com/bestian/hono-line-bot-template

RS

11:53:11

@uoly2009 If you don't mind, the summit team would like to help share the website link on Threads ٩( 'ω' )و

Yragem

13:59:44

Sorry for the trouble, and thank you all for the support. I'm extremely grateful.

mrorz

15:34:39

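I think the experience shared in the emergency response guide is extremely valuable and should be able to help a great many people!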

2026-05-02

johannr

13:18:00

@johannr has joined the channel

陳樂樂

15:29:16

@goodlele961011.428 has joined the channel

2026-05-03

Tony Chou

23:23:59

@cool21540125 has joined the channel

2026-05-04

Otis Wu

14:39:56

My phone isn't going to get a call, is it?

ballfish

22:17:10

Hello, g0v Summit will never phone you, nor ask you to operate an ATM, so rest assured, and please watch out for scam calls 🙏

Kernel Rootsevelt

23:31:35

@kernelrootsevelt has joined the channel

2026-05-05

sde730fu

01:40:54

@sde730fu has joined the channel

shane_qwq

08:40:49

@fom4921555 has joined the channel

蛤啊

19:48:12

@npcwi0119 has joined the channel

2026-05-06

sup6yj3a8

01:34:16

@sup6yj3a8 has joined the channel

Jim

15:51:52

@tio212 has joined the channel

Meaghan Ferguson

19:10:29

@mfergss00 has joined the channel

2026-05-07

Margaret Hung

06:20:20

@mhung120 has joined the channel

yas

09:14:20

@yasuyoshi.01 has joined the channel

2026-05-08

Juliana Jin

01:51:57

@juliana.jin has joined the channel

dion12389

07:34:34

@dion12389 has joined the channel

Tommie

18:46:06

Hi there, I want to share an exciting opportunity that may be of interest. The Serpentine (UK) has just launched the open call for the inaugural Future Art Ecosystems (FAE) R&D Fellowship.

This is a six-month, low-residency programme supporting four practitioners working at the intersection of art and advanced technologies. Fellows will receive a £10,000 stipend, bespoke mentorship, and a significant public platform through Serpentine, with a cohort shaped around a defined research question and active R&D development.

*The fellowship is open to international applicants with travel and accommodation for three London weekend intensives.*

The open call is now live and you can apply via our website:

https://www.serpentinegalleries.org/whats-on/future-art-ecosystems-rd-fellowship-art-x-convergence/

m80126colin

21:49:05

@m80126colin has joined the channel

Vendula Subert

23:42:54

@vendulasubert has joined the channel

2026-05-09

zxc0624a

00:09:17

@zxc0624a has joined the channel

k66

10:30:05

@k66inthesky has joined the channel

chan

16:26:26

@snusae has joined the channel

2026-05-10

ycliu0509

22:38:02

@ycliu0509 has joined the channel

2026-05-11

Raymond Chen

00:40:43

@raymond085 has joined the channel

2026-05-12

Yragem

00:35:24

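Hi everyone, there is an important update on the PCIT payee account security initiative I shared here earlier 🎉

The proposal has officially passed review on join.gov.tw and enters the endorsement stage today!

If it reaches 5,000 endorsements within 60 days, the Financial Supervisory Commission (金管會) must formally respond.

Proposal link:

https://join.gov.tw/idea/detail/ba071237-01f4-40c4-a322-b1c0b16111dd

---

A few important updates:

:one: The same proposal was submitted this January, but with only 251 endorsements it was not accepted. A supporter from that round provided a key piece of information: Canada's Interac e-Transfer already lets payees choose whether to accept an incoming deposit, which shows PCIT is entirely feasible technically.

:two: The website has moved to Cloudflare Pages. New URL: pcit-tw.pages.dev

3️⃣ The LINE bot conversation script is finished, thanks to bestian's template

---

What we most need from the community right now:

📣 Spreading the word

If just 100 people a day spend five minutes endorsing, we can reach the target within 60 days.

If you are willing to share it with your own communities, it would mean a great deal to this proposal.

💻 Tech

For engineers interested in helping develop the LINE bot, the script and architecture are both ready.

GitHub:https://github.com/Lilian-000/pcit-linebot

📝 Experience

Does anyone have experience pushing a join.gov.tw proposal to 5,000 endorsements?

I'd like to know what timing and strategies work best.

Thanks, everyone :pray: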

- 🙌2

- 💡3

- 🔥2

Yragem

00:37:33

ㄧ

irvin

02:48:45

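You could try messaging influencers who have talked about fraud before and asking them to share it.

Then scout out posts and news stories about flagged accounts (警示帳號) and post the link in the comments under them.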

zlu

03:38:16

@zlu has joined the channel

bestian

06:15:57

Endorsed! You could also ask people who have already endorsed to share it on their own social media.

ronnywang

07:52:35

For the website, I'd suggest adding an og url, so that shares on social media come with an image and spread better.

bestian

11:37:14

@uoly2009 Does the website itself have a public repo? If so, interested community members could send PRs directly.

pcit-tw.pages.dev

Dong

12:38:32

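[Free tickets | g0v Summit 2026 tickets exclusively for g0v participants!]

g0v Summit 2026 turns guests into hosts (反客為主) and invites all "returning guests" (返客) to come back and reunite!

If you have ever taken part in a g0v event and pitched a proposal there, or made any g0v contribution (code, collaborative notes, design, and so on), fill in the form now and you'll get a free ticket once it is reviewed!

If you've already bought a ticket, don't worry: you can choose either a full refund in exchange for a free ticket, or a Summit gift pack.

g0v Summit comes once every two years. Fill in the form: guests become hosts, and returning guests take the lead (反客為主,返客為主)!

Fill in the form here: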

https://forms.gle/JsXGcSnfaV9qu6Qf9

6

6 3

3- 2

- 👍2

Jenyu

16:11:10

@jenyu.p has joined the channel

weare

18:39:16

@weare has joined the channel

Yragem

21:12:46

@irvin Thank you for the valuable suggestions, irvin. I've added them to the 60-day action list.

Yragem

21:14:05

@ronnywang Thank you for the valuable suggestion. I'll add the og tags right away.

Evelyn Siu

22:08:16

@evelyn.siu12 has joined the channel

Yragem

22:21:41

2026-05-13

Chen Tyson

02:10:37

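I recently built a browser-native agent incident replay runtime, something like a flight recorder for the agent era. If you're interested in replay, evidence, and adjudicability of agent behavior under OS-level enforcement, you can try it out in a Chrome-based browser.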

https://slashlife.ai/agent-gate/demo

SlashLife AI

SlashLife AI runs your AI agents through isolated worlds and returns a signed pass/fail verdict before release. CI-native, self-hosted in your AWS — no prompts, outputs, or secrets leave your VPC.

Chen Tyson

2026-05-19 01:31:47

2026-05-14

bestian

15:36:56

蔡朝翔

15:44:42

@purimsean0827 has joined the channel

bestian

16:57:06

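Hi everyone, the meta-polis for <#C2Q1M4N1J> is now live. You're welcome to post issues you consider important there, so that through broad listening and opinion synthesis we can gather views from all sides and find possible consensus. Thanks!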

https://www.vtaiwan.tw/polis

- 💡2

nobody7548

19:27:54

@nobody7548 has joined the channel

yi.sun1_g0v

19:32:11

@yi.sun1_g0v has joined the channel

ian chou

19:35:13

@ertiach has joined the channel

2026-05-15

Drew Marshall

05:06:48

@drewjoma has joined the channel

Benjamin Pham Roodman

10:31:34

@bnroodman has joined the channel

2026-05-16

peichin

09:53:48

@peichin has joined the channel

caffeine

10:50:29

@henryjheng.dev has joined the channel

Krishna Maharjan

17:20:10

@thekopkrish has joined the channel

2026-05-17

corrietang

00:45:19

@corrietang has joined the channel

RFR

22:03:04

@rraferoot has joined the channel

chewei 哲瑋

22:47:07

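:2026_summit_stool_l: g0v Summit 2026 is six days away! 5/23-24

https://summit.g0v.tw/

g0v summit

g0v Summit 2026 is the biennial gathering of Taiwan's g0v community and a major event for civic hackers and civic tech worldwide. Democracy is more than voting and campaign cheers: Summit 2026 invites you to keep contributing and connecting globally, because citizenship never closes.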

2026-05-18

Deal

04:57:32

@deal518.service has joined the channel

qiqihuang0831

12:33:43

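Hi everyone, this is 77, head of the venue team for g0v Summit 2026. Summit (啥米) is less than a week away. If you happen to have free time on 5/22, please come to Academia Sinica and help set up the venue for g0v Summit (we really need you!!)

77

Hi everyone, we're currently a bit short on hands for the 5/22 venue setup. If you can, please bring friends along and help us finish building the Summit's basic infrastructure!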

https://docs.google.com/forms/d/e/1FAIpQLScrUN8S9SFVuKoFEC7ApXCN1MbhlCc2hmYFSep67nQadvaSUw/viewform

- Forwarded from #summit-2026

- 2026-05-18 12:31:07

- 🙏4

Ted Shen

12:41:48

@tedshen.der has joined the channel

2026-05-19

Chen Tyson

01:31:47

林承慶

12:13:35

@ck10100395 has joined the channel

2026-05-20

Adam

01:00:14

@yadang890 has joined the channel

Gus Richardson

08:17:19

@gushrichardson has joined the channel

Junghyuk Han

11:48:12

@contact781 has joined the channel

Alex Hsieh

15:43:45

@alex880 has joined the channel

Yiyi

20:16:12

@yiyilee has joined the channel

iiitasan

21:17:04

@iiitasan has joined the channel

wc.1233.chan

21:21:20

@wc.1233.chan has joined the channel

呂佳旻

21:41:02

@chiamin0207 has joined the channel

俞凱倫

22:11:59

@kelen3299 has joined the channel

annewang.yt

23:23:46

@annewang.yt has joined the channel

2026-05-21

jiy850378

00:34:36

@jiy850378 has joined the channel

newtaiwanstar

06:36:51

@newtaiwanstar has joined the channel

高蓉蓉

09:40:26

@a0952237374 has joined the channel

Betty

10:19:23

@b21772134 has joined the channel

Yuyi Chang

10:42:46

@yuyi.chang has joined the channel

nakamura200118

11:08:13

@nakamura200118 has joined the channel

macpaul

15:25:08

RS

15:54:03

There's even a summary! That's so cute XD

macpaul

16:14:21

I'm an AI image-making fiend; I can generate an easy-to-read explainer pack (X)

Nathan

17:57:14

@me1128 has joined the channel

nathanlee8189

18:39:37

@nathanlee8189 has joined the channel

Chu Edwin

22:58:38

@edwinchu105 has joined the channel

2026-05-22

Tuo-Hong Chen

00:57:25

@c.tuohong has joined the channel

Billy Lui

11:30:55

@billyluisc has joined the channel

yufang

11:49:17

@yufang has joined the channel

mina930815

13:04:34

@mina930815 has joined the channel

PeiNi

15:42:44

@pieniii has joined the channel

za91102

17:27:55

@za91102 has joined the channel

K

17:54:29

This is great, but the text next to the checkbox says "you will send me spam" 😅, and if I don't tick the checkbox I can't continue.

bleach1827

20:39:58

@bleach1827 has joined the channel

Chia-ying

20:54:55

@jennifer7410 has joined the channel

Paper

21:28:05

@faition2 has joined the channel

KYS

21:32:28

@lavender6996 has joined the channel

clong

21:50:20

@steven7254 has joined the channel

zyy11220

22:56:45

@zyy11220 has joined the channel

ytbcd2b52b

23:47:12

@ytbcd2b52b has joined the channel

2026-05-23

tiffany900709

00:11:33

@tiffany900709 has joined the channel

tzu

00:22:40

@tzu has joined the channel

Sciuridae

01:19:54

@g0v660 has joined the channel

leung3sir

07:44:59

@leung3sir has joined the channel

atstuart

08:07:46

@atstuart has joined the channel

kiang

08:55:46

A zhuyin (bopomofo) learning website designed for foreigners 👉 zhuyin-learning.vercel.app

Source

https://www.threads.com/@yui.uiuxrookie/post/DYo2x_zke1V

- 1

elsa.sapporo

09:29:59

@elsa.sapporo has joined the channel

irvin

09:44:15

youtube.com

Enjoy the videos and music you love, upload original content, and share it all with friends, family, and the world on YouTube.

3

3

maxime.cuillerier

09:47:56

@maxime.cuillerier has joined the channel

小小鳥

09:52:37

@ymkuo552 has joined the channel

issacbyming

09:54:11

@issacbyming has joined the channel

jockettey

10:02:24

@jockettey has joined the channel

viktor

10:47:05

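A reminder that student tickets do include the networking party! If you're using the digital credential wallet (數位憑證皮夾), the networking party field on your ticket may wrongly show false. Sorry about that~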

銘彥 Bîng-gān

10:47:22

@liho has joined the channel

viktor

11:38:28

https://g0v.hackmd.io/@summit2026/notes

Summit 2026 agenda collaborative notes

HackMD

# g0v Summit 2026 Collaboration Notes Overview - [議程表 Agenda](<https://summit.g0v.tw/2026/agenda>) -

1

1- 1

u.o.p.e.l.t

12:33:37

@u.o.p.e.l.t has joined the channel

Jack Broome

15:33:27

@jack.broome has joined the channel

wittyegrin

16:07:03

@wittyegrin has joined the channel

雪泥

19:13:44

Tomorrow, remember to bring your

雪泥

19:14:21

*Badge*

雪泥

19:14:31

唷6ω6

evchen

20:13:36

@evchen has joined the channel

evgeniiachen

20:23:16

@evgeniiachen has joined the channel

chewei 哲瑋

21:50:45

Channel List

Channel Portal

chewei 哲瑋 _

*g0v Slack Channel Guide (Channel Portal)*

.Global & Local: 50+ channels

.Infra / G0vernance: 50+ channels

.Edu / Learning / Health / Living: 60+ channels

.Open Gov & Projects: 100+ channels

*Global & Local*

<#C02G2SXKX> Community lobby; feel free to ask any question here! G0v City Hall / Plaza

<#C1CHAA0QL> <#C02G2SXKX> English version

<#CDE487J9K> <#C02G2SXKX> Japanese version

<#CDDNVDT8U> <#C02G2SXKX> Korean version

<#C08JJ5U3LMN>

<#CV4P9953R>

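<#C046KHB3FJ4> Chiayi

<#C2A8F3JAH> Tainan discussion group!

<#C04SLHPKNV7> Kaohsiung discussion group!

<#C093UNUEDNV> Pingtung discussion group!

<#C02QGDPCFGS> Xiaoliuqiu (Liuqiu) discussion group!

<#C7KEUPGG1> Taichung discussion group!

<#C08NXM19H9V> Changhua discussion group!

<#C08PG7VMKA7> Yunlin discussion group!

<#C06BCEL2P3R> Nantou discussion group!

<#C0ATM65TJBU>

<#C044J653KLL> Hualien discussion group!

<#C09A8PB1W7K>

<#C08B4G9UDBQ>

<#C06E8UFE69Z> Kinmen discussion group!

<#C03JGA6FSKF> Matsu discussion group!

<#C06PNN6L067> Keelung discussion group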

<#C09CF786H7U>

<#C09FH5PDX7C>

<#C0B02271G1J>

<#C0AQAGPV2CT>

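<#C06HSQL9C1W> On relocating, returning to one's hometown, and how city dwellers can work with local communities to build a new hometown / the channel has many members who follow Yilan

<#C08BPK3F3UZ> Support for local communities or initiatives; if you have related ideas, come discuss them in the channel ~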

<#C5FGJFDMW>

<#CDA9C2JTG>

<#CQDRK4GNM> https://github.com/g0vhk-io

<#C017QFJ5AM8> Paragliding project (飛行傘計畫)

<#CHA2QJP9A>

<#C09K81TDZ1N>

<#C012HCFD281>

<#C01TT6ENVKR>

<#C05R1MF0MNY> Thailand and the Thai language

<#C63V7JB2P>

<#C06J7EG8UAH> Vietnam and the Vietnamese language

<#C06D4RE849Z>

<#C07J5QD92Q6>

<#C08K2DD907N>

<#C06GQ2UPQ1M> Australia

<#C09V6JXP92B>

<#C0A3B3BHXSM>

<#C04A4P821> If you want to collaborate in the US, come chat here!

<#C05FZAX1Z9U> g0v Silicon Valley / Bay Area meetup

<#C0AL6T9KSKH>

<#C0AAS101065>

<#C096CE120EA>

<#C090GTHDLFL>

<#C034X4820CC>

<#C0AM01D2UGM>

<#C0944NVFKKP>

<#C06K7ST2GMA> Germany and German-speaking regions

<#C088ZNXG4PR>

<#C023C1QMKRR>

<#C0AK02J8HSP>

<#C0ALNAV0SAW>

<#C08KSRFBNNM>

<#C0AM5JTGESE>

<#C04F0PN57NV> https://github.com/g0v-it

<#C084CU74J> International exchange working group, g0v international; all international exchange information is here

<#C01Q8THBQG6> Connected to Code for Korea & Code for Japan

<#C0616EQS35G> Internet / digital governance

*Infra / G0vernance*

<#CGU1SLHNH> Feel free to chat here 😄

<#C012AG0SC0H> Welcome to g0v slack! This is the self-introduction channel; please introduce yourself to the g0v community ❤️

<#C048NKSFZEF> Introductions and portals for all kinds of channels. G0v slack channels portal.

<#C0149FAJS1L> Awesome g0v. Find projects, proposals, collaborative notes, and helpers, all in one place

<#C082BAJ7E90> Assist project leaders in promoting their projects, organizing related execution experiences and suggestions

<#C07R2PMV7K2>

<#C090XEG90F8>

<#C08FQ844DLK> All things NPOHUB

<#C0385B90D> Discussion of jothon (揪松團) events (hackathons, infrathons) https://jothon.g0v.tw/

<#C08KQGMK1NE> Collecting matters related to organizing online events

<#C08BPK3F3UZ>

<#C06ARNDP3CN>

<#C0AKDCAC0EA> Impact assessment

<#C08LV45P87L> Community passport

<#C0AF1QXKKE1>

<#C087CSHFK53> Applying for booths and helping staff them

<#C0ASRQF7AUA>

<#C088N2XFVA8>

<#C06V97CAH19>

<#C073T6J5J2Z>

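<#CBNQXSAP7> Translation channel: i18n + l10n, translate everything.

<#C0483Q7ALN6> Community translation glossary. Starting from software localization needs, it collects the terms individual community members use in their own translation and interpreting projects, and aims to cover more than one language.

<#C04L3MK0K1V> Channel for mutual help with project naming

<#C05CPF3DG1E> Pun & Fun: sharing puns

<#C06CMKFCM96> A channel for venting your feelings 🙂

<#C9WFAPPV5> Dedicated to the newcomer experience flow

<#C05326H3S72> g0v AI bot, aka the project-finding Sorting Hat

<#C069ARZJNE9> A virtual community reporter sharing community news

<#C01KQ3ES98U> Automatic résumé generator (with hello g0v)

<#C03PL9TK83A> g0v senpai (零時先輩)

<#C0386M58S> Community infrastructure development & maintenance; come help out!

<#C433NEJSJ> Status notification channel for g0v websites

<#CF3JH3H1C> All things g0v domains, and domain applications

<#CSGKA8G75> All things g0v GitHub

<#C0G0T65S9> A place for UI; has a GitHub

<#C01RDCVDGHZ> All things g0v slack: app requests and governance discussion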

<#CV97224UW>

<#C01SHPD80UD> Cross-platform information flow tools

<#C04HYS66X1D> Discussing the Mastodon server

<#CBLASC4CF> HackMD usage experience and issue reports

<#CHPAZECAV> Discussion on community governance

<#C04S9VBTQCV> Community governance of the g0v.tw website, and proposals for listing community events on the calendar

<#CE2HFQN67> Academic research on g0v and community projects

<#C04HYS66X1D> creating g0v.social, a decentralized social network

<#CPKVDVD88> g0v SNS platform guidelines and posting discussion

<#C088TR4A709> jothon (揪松團) SNS and audience communication

<#C30F846JU> g0v news

<#C02QA1JNHAR> g0v underground (零時電台) https://linktr.ee/g0vpodcast

<#CQZ8MV7A8> Public channel for the g0v Summit conference https://summit.g0v.tw/

<#C036C0ACSQ7> Planning channel for the 10th anniversary events https://10th.g0v.tw/

<#C069MJZV85A> 摩茲工寮 community space https://moztw.org/space/

*Edu / Learning / Health / Living*

<#C0960M3B30V>

<#C8DEZ566S> Moedict (萌典), dictionaries

<#C11GNUL95> Amis Moedict (阿美語萌典)

<#C0N9DK6JU> 愛台語: if you're fluent in Han characters and Tâi-lô, come help proofread

<#CD75A171D> ChhoeTaigi Taiwanese dictionary⁺

<#C075YHE8L06> Silicon Valley Library Taiwan Book Insertion Project

<#C0AUQDSFYGG>

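<#C08NDP85ASF> A map projecting the distribution of Taiwan’s elementary school population in 2040 (民國 130 年), estimating future school enrollment and exploring campus repurposing and democratically run schools

<#CN64A1FHA> Main channel of 零時小學校: open-source collaboration and education work

<#C0250L50324> A platform visualizing projects and tasks for civic tech contributors

<#C028JBN5H2B> 伴伴學 community channel

<#C015L48LHRQ> 小草書屋's "Happiness Passbook" (幸福存摺) project

<#C0182TQTVV2> 島島阿學 Slack URL: https://bit.ly/3yg5cFi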

<#C0954PJJM55>

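<#C01D21G7F0E> CoTeach, a platform for sharing lesson plan resources

<#C024NAMF0CV> CourseAPI, an open course information aggregation academy

<#C03GRV696RG> Lipoic is an education platform dedicated to integrating and improving remote teaching and online classrooms, so that students can learn without spatial limits and teachers can teach more conveniently. We are also passionate about open culture. Join us in transforming education!

<#C06CQHHL0SW> Discussing the experiences of student self-government organizations across schools

<#C06CQH8TNKD>

<#C03D2FWM57X> Student club sponsorship and management project

<#C03EPT3A01E> Stress relief platform project

<#C03E2FCP46Q> Collaborative high school history notes

<#C03DTBP3HGW> Literary writing website: 廢青天地

<#C03E2EZRB8C> 「職」凱瑞你 Carry your career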

<#C03DX829G05>

<#C03E05DF935>

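<#C03DX7X45EH> School lunch leftover food project

<#C02RGBHUYKS> Platform integrating educational resources for high school students under the 108 curriculum guidelines

<#C03DC2G2JFM> Survival guide to learning portfolios under the 108 curriculum guidelines

<#CUCCK9353> Helping students get better at managing their tasks

<#CL96QTF5G> 回音森林, a pronunciation correction app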

<#C068EDJ40V9>

<#C027VH2GXNW> 零時小學校 camp activities channel

<#C01JVF9FUKB> 零時小學校 channel for instructors and lesson plan exchange

<#C01PLLJJP51>

<#C05475LAQ6B>

<#C04N42FJWHH> University course information exchange platform

<#C04Q5NG2XT9> Channel for NTU-related courses and activities

<#C05AV83UHM1>

<#C0G0478DC>

<#C03859QD7> Designers' channel

<#C5EEC5EEN>

<#C9V3KLLGH>

<#C06181VGWH2> Interactive website for learning Linux commands

<#C05BHSUT0TS>

<#C09ACNRUES1> Functional programming community; events held monthly!

<#C0923BV964F>

<#C055ZJBKZML>

<#C05PNGXC4KU>

<#C057R6D8UKE>

<#C06D320J537>

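<#C02L3PNNV> COSCUP (開源人年會)

<#C0A04TMPP2A> A creative coding community built by teenagers https://www.hackit.tw/zh-TW

<#C0241463T47> Technical exchange channel for OBS, the open-source cross-platform streaming and recording software

<#C09FAN97H> g0v band (零時樂團) :musical_score:

<#C09QQEHGYJU> A harmony analysis app combining artificial intelligence (AI) and music theory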

<#CF5CMMSDS>

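<#C022NUX0Z8D> Everyday-life information for staying at home

<#C022299HZPC> Health issues, health checkups

<#C0999FDUDMW> Making drug package inserts easier to read

<#C0136MDHEMB> Unofficial project to develop a National Health Insurance card reader component

<#C4M4S24NS> Mental health resources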

<#C06LFU2NDD0>

<#C01LWGSJLDT>

<#C096TGRJTHP>

<#C87MZ9SUR> Get moving (exercise)

<#C2Z5JG9G8> for hiking affair

<#C04AXQQQDEF> :camping: Data on 205 legal campsites across Taiwan, with an online map

<#CQASS2PL7> Exchange on NGO operations

*Open Gov & Projects*

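<#C082GPK1Y1F> g0v Digital Democracy Working Group (digital democracy research project)

<#CUM4P4895> Focus on the drafting of Taiwan's "Open Government Action Plan"

<#C05V224CZKL> Public Money Public Code

<#CH79PR9FY> #金流百科 (political donations, referendum fundraising, government tenders, visualization)

<#C067VNP6P88> Election-related projects or ideas

<#C040Y313L6P> Collecting election billboards

<#C048CBS8TUY> Platform compiling candidates' policy platforms

選前大補帖 (pre-election guide); the project has its own Slack https://join.slack.com/t/taiwanvotingguide/shared_invite/zt-20t7r7oo3-KmgRaARq_1qHo1VECY8ssQ

<#C0525UB7JCE> Updated progressively with each year's elections; user feedback and feature wishes welcome!

<#C0AAKG273NH> 憲庭加好友 / Constitutional Court

<#CDRE0Q0CE> g0v Congress Hackathon, Legislative Yuan data

<#C0386PRQU> Congress, Legislative Yuan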

<#C03B427TG91>

<#C06HF2GMQSC>

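<#C25J1PTFB> Law amendment collaboration tool

<#C0QHLAGH5> 立委咖電喂 (phone your legislator)

<#CGRJJK0M9>

<#C08F84BQMME> Requesting help compiling petition rolls: legislator recall

<#C04FLCD6YFP> Anyone interested in raising the age threshold in the Criminal Code to twenty is welcome to join!

<#C2Q1M4N1J> Deliberation on regulations and issues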

<#C05MGLXK0AH>

<#C6HN8HRGD>

<#C049V0A9M35>

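<#C017GBM9Z2S> Chip-based national ID card

<#C04P2TY1QSZ> Channel focusing on personal data privacy issues

<#C01GX97MTT8> Want to discuss Taiwan's now-established Ministry of Digital Affairs?

<#C012S9ABTL7> Presidential Hackathon

<#CSFDD01AN> US Taiwan Watch (美國台灣觀測站)

<#C081STFCWM7> Guide to Northern California members of Congress

<#C2PNGP8N8> 國家寶藏 (National Treasure): calling on volunteers to dig into national archives and libraries around the world, unearth historical materials related to Taiwan, and build an open online database free for everyone to use. This project aims to bring these documents within the sight of the public, so that everyone can forge their own stories that were never told before.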

<#C02C6DA2L8Z>

<#CS16Q642K> Documentation of East Asian women's empowerment movements

<#C02GD9FD1H8> Basic data on international think tanks (csv)

<#C040NMX9UMS> Multilingual info cards

<#CV42198BE> Wikidata Taiwan discussion channel

<#C0CBAUFV3> Data

<#C06J59AE9GD>

<#C086LQXUE69> Defence Against the Dark Arts (網路黑魔法防禦術): a channel for discussing how to fight fraud. The channel name comes from the Hogwarts school subject in the Harry Potter novels.

<#CNYM62P6X> Collecting and organizing sources of misinformation

<#C2PPMRQGP> 真的假的! Rumor fact-checking Linebot :speech_balloon: Cofacts is a collaborative system connecting instant messages and fact-check reports together. It’s a grass-root effort fighting mis/disinformation in Taiwan.

<#C08KQ2HLJA2>

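<#C02JTFZRNEP> Sustainable income lab, sustainable giving issues

<#C2YQT8L4A> Social welfare issues and data

<#C05DXC8CNGP> Open corporate sustainability database - ESG checker

<#C06MKUR9N2F> Our goal is to open up funding sources for advocacy NGOs: by collecting information on corporate donations and potential directions for cooperation, we are building a database that gives organizations more opportunities to work with companies.

<#CQS6318KU> 透明足跡: with open, transparent information, pollution has nowhere to hide

<#C4Z9BGHPZ> Discussing civic issues related to labor

<#C069J08CXRS>

<#CUTQ3NF4K> PTT must not die; new version in testing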

<#CUTEBCBUH>

<#C03RAK46BEC> 零時道 :cat: Supercharge g0v & the future of civic innovation w/ DAO, web3, etc. https://da0.g0v.tw/

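<#C046T7HT05U> Studi0 is running a funding experiment with Hypercerts

<#C046ZFGTWQK> An evolution of incentivizing g0v, building an organic contribution system

<#C046EJH4P2B> Producing a podcast on public goods and DAOs

<#C049L7M5X9S> Ongoing monthly reading group

<#C047A5734FK> Building a web3 task reward system

<#C04FRRV17GF> Laying down da0's infrastructure

<#C051REPUTSS> Early-stage conversations with Dark Matter Labs on AI

<#C04UZ3JNH9A> Joining the global decentralized science wave from within g0v's self-governing organization, introducing and exploring the possibilities of decentralized science

<#C0525SELHFY> Collaboration with Ethereum's PSE, covering zero-knowledge proofs

<#C051U8RSTHV> Discussing holding a hackathon in Austria at the 2023 Ars Electronica Festival in Linz

<#C05BDAUDGP8> A small project focused on how digital technology can be applied to place-making. The name is the Japanese word for "local region" (地方), familiar in Taiwan from the term 地方創生 (ちほうそうせい, chihō sōsei), meaning regional revitalization.

<#C0483Q7ALN6> Software localization and translation glossary project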

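<#C59M1NZV2> middle2 is an open-source PaaS platform

<#C04GALK46SC> Promoting self-hosted servers

<#C012MPC6GQ4> AWS users' exchange channel

<#C091S7KH8> Development of the g0v air pollution observation network (零時空污觀測網)

<#C055GLJS94G> Open-source DIY air purifiers, for anyone who is interested in helping make Clean Air available everywhere using open source DIY air purifiers

<#CTMK5QPA8> Mask map & pandemic-related

<#C020EQ0R8TW> Vaccine-related

<#C026J0M3EBE> Site directing users to vaccine appointment booking

<#C02JTH1SYUT> Guidance for after pandemic restrictions are lifted

<#C056EHM42B1> Civil defense

<#C0955CHRVM1> Database of united front operations targeting Taiwan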

<#C082GMDH39S>

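<#C01JWUGCS5C> Taiwan Air Defense Identification Zone project

<#C0691H2GSB0> Civil defense afternoon tea

<#C047WUCMWEA> Exploring the use of the ATAK-CIV mobile app in disaster response, civil defense, and outdoor mission scenarios

<#C0711704PHA> Meshtastic Taiwan Community (臺灣鏈網)

<#C069DF8GNMR> Improving Taiwan's disaster preparedness through task-based efforts

<#C095JBP0NRE>

<#CD9EMS0F3> Geographic information and geodata :earth_asia: Geo-data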

<#C0AEYRT8RU3>

<#C07D1NCHBB9> Energy issues channel

<#CJTBP7YRK> Rental housing data and issues

<#C04PE6MAKQE> Geographic Referencing for Technology Transfer via Bioregional similarity. Aggregating and associative mapping data.

<#C04FQLYGJE6> Crowdsourced platform for annotating sidewalk walking conditions

<#C0AMEQWMRM1>

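<#C02BVH9569J> Formosan black bear reporting platform :bear:

<#C02HY3VU9J4> Helping find lost cats :cat:

<#C18TYPFJ8>

<#CC789GL15> LostSAR, an open-source search and rescue application

<#CN6CT9QG6> Cityscape issue reporting tool / Linebot

<#CNA60GZJM> Reporting illegal factories; uses GeoDjango for geospatial queries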

<#CS13KTC0N>

<#C029FJKJT19> Sugar industry railways and industrial landscape

<#C2YH0QTKJ> Urban planning / database of Urban Planning Commission meeting minutes

<#CPRDWQ8N4> Public land data and maps

<#C05LTMJRJSJ>

<#C06CSUZS23F>

<#C0227C7CSNQ>

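<#C09FFUQT1DF> Discussing marine debris

<#C06GFQ8QQ2E> Rivers and watersheds

<#C03A0P6GL> 超農域, pesticide lookup system, Nanzhuang Tung Blossom hackathon (南庄桐花松), agricultural data and projects :ear_of_rice: Agriculture related projects

<#CQSH4F276> Counting trees via remote sensing, finding land via map data, advocating tree planting :deciduous_tree: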

<#C0ALS9R0L07>

<#C026NAFMF89>

<#C01DEU4RD3N>

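<#C01DYJR28EL> Gluten-free safety net

<#CUCCDM12R> 食食課課: connecting life and culture through food

<#C62GMAJ2F> Promoting food sharing

<#C053LPLRG2D> Coffee chat https://naturechats.com/

<#C06QQ5EDQ> about art

<#CU8ULS2UW> Let's build a mascot database together~ over 1,300 characters collected so far! :sun_with_face:

<#CU0HARXS7> Structuring the content of predictions and projections :chart_with_upwards_trend: future predictions

*Slack channel content backup URL (thanks, Ronny!)*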

https://g0v-slack-archive.g0v.ronny.tw

*Facebook Group*

.零時小學校 Facebook Group: https://www.facebook.com/groups/240879797438433

.資料申請小幫手 (data request helper) Facebook Group: https://www.facebook.com/groups/2819127468115692/

.【Cofacts 真的假的】 editors' exchange Facebook Group: https://www.facebook.com/groups/1847232902175197

.Open political donations discussion: https://www.facebook.com/groups/271528425531357

.g0v Bay Area community: https://www.facebook.com/groups/824770435679041/

*Discord*

.【Cofacts 真的假的】Discord: https://discord.gg/mmZS9sZuau

.Confirmed-case footprint map Discord: https://discord.gg/ePKuRGE9sF

.零時小學校 Discord: https://discord.gg/csDjWBbhvf

.島島阿學 Discord: https://discord.com/invite/2NbQ7cu6jH

.UniCourse university course information exchange platform Discord: https://discord.gg/VtFzwAdrXF

.Lipoic remote teaching and online classroom platform Discord: https://discord.gg/ArKk54ajfr

.Flip history! Learn history with a Minecraft RPG! Discord: https://discord.gg/e6vhTq43gs

.綠洲計畫 - special talent admissions information & experience sharing platform Discord: https://linktr.ee/lzgh2023

.自學力 製學例 website https://www.ability-of-self-studying.tw/

.開源星手村: board game production and promotion Discord: https://discord.gg/SFY2JwdBr9

.Grapycal graphical programming language Discord: https://discord.com/invite/adNQcS42CT

.CISC (中學資訊討論群), secondary school computing discussion group Discord: https://discord.gg/cisc

.Hack It: organizing hackathons for high school students https://discord.gg/u4z8EFR2gr

.Northern Taiwan student computing community (北臺灣學生資訊社群) Discord: https://discord.scint.org/

.Central Taiwan joint conference of high school EE/CS clubs (中部高中電資社團聯合會議) Discord: https://discord.com/invite/At7r54v94c

.Southern Taiwan student computing community (南臺灣學生資訊社群) Discord: https://discord.gg/6QW6gqhHQe

.伴伴學 Discord: https://discord.gg/azQUs8Y2fY

.Code for Taiwan Discord:https://discord.com/invite/pRFjDXeFyv

.Formosa Art Bank (福爾摩沙藝術銀行) decentralized governance organization Discord: https://discord.com/invite/skvQAGPBDV

.GreenSofa (綠沙發): funding the development of Taiwan's public goods with Web3 Discord: https://discord.com/invite/skvQAGPBDV

.Twinkle AI Discord: https://discord.gg/Cx737yw4ed

.Code for Korea Discord https://discord.gg/xNNvfhJUV5

- Forwarded from #joinchannel-slack-頻道名稱彙整

- 2026-05-07 02:22:10

chewei 哲瑋

21:50:45

Channel List

頻道傳送門

頻道傳送門

chewei 哲瑋 _

*g0v Slack Channel Guide 頻道傳送門*

.Global & Local:50+ 個頻道

.Infra / G0vernace:50+ 個頻道

.Edu / Learning / Health / Livingl:60+ 個頻道

.Open Gov & Projects:100+ 個頻道

*Global & Local*

<#C02G2SXKX> 社群大廳,可以在這邊提出任何問題! G0v City Hall / Plaza

<#C1CHAA0QL> <#C02G2SXKX> English version

<#CDE487J9K> <#C02G2SXKX> Japanese version

<#CDDNVDT8U> <#C02G2SXKX> Korean version

<#C08JJ5U3LMN>

<#CV4P9953R>

<#C046KHB3FJ4> 嘉義

<#C2A8F3JAH> 台南討論群組!

<#C04SLHPKNV7> 高雄討論群組!

<#C093UNUEDNV> 屏東討論群組!

<#C02QGDPCFGS> 小琉球討論群組!Liuqiu

<#C7KEUPGG1> 台中討論群組!

<#C08NXM19H9V> 彰化討論群組!

<#C08PG7VMKA7> 雲林討論群組!

<#C06BCEL2P3R> 南投討論群組!

<#C0ATM65TJBU>

<#C044J653KLL> 花蓮討論群組!

<#C09A8PB1W7K>

<#C08B4G9UDBQ>

<#C06E8UFE69Z> 金門討論群組!

<#C03JGA6FSKF> 馬祖討論群組!

<#C06PNN6L067> 基隆討論群組

<#C09CF786H7U>

<#C09FH5PDX7C>

<#C0B02271G1J>

<#C0AQAGPV2CT>

<#C06HSQL9C1W> 關於如何移住、回到故鄉,以及城市人如何與地方一起打造新故鄉的實踐 / 頻道有很多關注宜蘭的朋友

<#C08BPK3F3UZ> support for local communities or initiatives 有相關想法與構想,歡迎到頻道討論 ~

<#C5FGJFDMW>

<#CDA9C2JTG>

<#CQDRK4GNM> https://github.com/g0vhk-io

<#C017QFJ5AM8> 飛行傘計畫

<#CHA2QJP9A>

<#C09K81TDZ1N>

<#C012HCFD281>

<#C01TT6ENVKR>

<#C05R1MF0MNY> 泰國與泰語

<#C63V7JB2P>

<#C06J7EG8UAH> 越南與越語

<#C06D4RE849Z>

<#C07J5QD92Q6>

<#C08K2DD907N>

<#C06GQ2UPQ1M> 澳洲

<#C09V6JXP92B>

<#C0A3B3BHXSM>

<#C04A4P821> 想在美國協作的可以來這邊聊天喔!

<#C05FZAX1Z9U> g0v 矽谷灣區小聚

<#C0AL6T9KSKH>

<#C0AAS101065>

<#C096CE120EA>

<#C090GTHDLFL>

<#C034X4820CC>

<#C0AM01D2UGM>

<#C0944NVFKKP>

<#C06K7ST2GMA> 德國與德語區

<#C088ZNXG4PR>

<#C023C1QMKRR>

<#C0AK02J8HSP>

<#C0ALNAV0SAW>

<#C08KSRFBNNM>

<#C0AM5JTGESE>

<#C04F0PN57NV> https://github.com/g0v-it

<#C084CU74J> 國際交流工作小組 g0v international、國際交流資訊都在這

<#C01Q8THBQG6> Connected to Code for Korea & Code for Japan

<#C0616EQS35G> Internet / digital governance

*Infra / G0vernace*

<#CGU1SLHNH> 歡迎自由灌水閒聊 😄 Feel free to chat here.

<#C012AG0SC0H> 歡迎來到 g0v-slack,這是自我介紹的頻道,可以讓大家認識你唷!Welcome to g0v slack! Please introduce yourself to g0v community ❤️

<#C048NKSFZEF> 提供各式各樣的頻道簡介,以及傳送門 G0v slack channels portal.

<#C0149FAJS1L> 令人驚奇的零時政府。找專案,找提案,找共筆,找幫手 一站搞定

<#C082BAJ7E90> 協助坑主推展專案,整理相關執行經驗與建議 Assist project leaders in promoting their projects, organizing related execution experiences and suggestions

<#C07R2PMV7K2>

<#C090XEG90F8>

<#C08FQ844DLK> NPOHUB 大小事

<#C0385B90D> 揪松團相關活動討論(黑客松、基礎松)https://jothon.g0v.tw/

<#C08KQGMK1NE> 蒐集線上活動籌辦事務

<#C08BPK3F3UZ>

<#C06ARNDP3CN>

<#C0AKDCAC0EA> 影響力評估

<#C08LV45P87L> 社群護照

<#C0AF1QXKKE1>

<#C087CSHFK53> 申請擺攤、出攤協力

<#C0ASRQF7AUA>

<#C088N2XFVA8>

<#C06V97CAH19>

<#C073T6J5J2Z>

<#CBNQXSAP7> 翻譯頻道 i18n + l10n—translate everything.

<#C0483Q7ALN6> 社群翻譯語彙庫 (glossary),從軟體在地化需求出發,收集個別社群成員、在各自筆譯/口譯/翻譯專案使用的詞彙前進,並希望收錄不只一個語言。

<#C04L3MK0K1V> 專案取名稱的互助頻道 Channel for Project Naming

<#C05CPF3DG1E> 諧音梗交流 Pun & Fun

<#C06CMKFCM96> A channel for venting and sharing feelings 🙂

<#C9WFAPPV5> 致力於新參者的體驗流程 Dedicated to Newcomer Experience Flow

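<#C05326H3S72> g0v AI bot, aka the project-finding Sorting Hat

<#C069ARZJNE9> The community's virtual reporter shares community updates

<#C01KQ3ES98U> Automatic résumé generator (with hello g0v)

<#C03PL9TK83A> g0v senpai (零時先輩)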

<#C0386M58S> 社群基礎建設開發維護,一起來協力! Community Infrastructure Development & Maintenance

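<#C433NEJSJ> Status notifications for g0v websites

<#CF3JH3H1C> Everything about g0v domains; domain requests

<#CSGKA8G75> Everything about the g0v GitHub

<#C0G0T65S9> A place for UI work; has a GitHub

<#C01RDCVDGHZ> Everything about the g0v Slack: app requests and governance discussion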

<#CV97224UW>

<#C01SHPD80UD> 跨平台訊息流通工具 Cross-Platform Information Flow Tools

<#C04HYS66X1D> Discussion of the Mastodon server

<#CBLASC4CF> HackMD usage experience and issue reports

<#CHPAZECAV> 討論社群治理 Discussion on Community Governance

<#C04S9VBTQCV> Community governance of the g0v.tw website, plus proposals for listing community events on the calendar

<#CE2HFQN67> 以 g0v 為主的學術研究 Academic research on g0v and community projects

<#C04HYS66X1D> creating g0v.social, a decentralized social network

<#CPKVDVD88> g0v SNS platform guidelines and discussion of posts

<#C088TR4A709> 揪松團 (jothon) SNS and audience communication

<#C30F846JU> g0v news

<#C02QA1JNHAR> g0v underground 零時電台 https://linktr.ee/g0vpodcast

<#CQZ8MV7A8> Public channel for the g0v Summit https://summit.g0v.tw/

<#C036C0ACSQ7> Planning channel for the 10th anniversary events https://10th.g0v.tw/

<#C069MJZV85A> 摩茲工寮 (MozTW Space) community space https://moztw.org/space/

*Edu / Learning / Health / Living*

<#C0960M3B30V>

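<#C8DEZ566S> 萌典 (Moedict), dictionaries

<#C11GNUL95> Amis-language 萌典 (Moedict)

<#C0N9DK6JU> 愛台語 (iTaigi): if you are fluent in Han characters and Tâi-lô, come help proofread together

<#CD75A171D> ChhoeTaigi Taiwanese dictionary⁺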

<#C075YHE8L06> 矽谷圖書館台灣書籍植入計畫 Silicon Valley Library Taiwan Book Insertion Project

<#C0AUQDSFYGG>

<#C08NDP85ASF> 民國 130 年的全國小學生人數推估湧現地圖,推估未來學校就學人口,探討校地轉型與民主辦學 A map projecting the distribution of Taiwan’s elementary school population in 2040

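<#CN64A1FHA> Main channel of 零時小學校 for "open-source collaboration and education work"

<#C0250L50324> Platform for visualizing civic tech contributors' projects and tasks

<#C028JBN5H2B> 伴伴學 community channel

<#C015L48LHRQ> The "幸福存摺" (Happiness Passbook) project of 小草書屋

<#C0182TQTVV2> 島島阿學 Slack URL: https://bit.ly/3yg5cFi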

<#C0954PJJM55>

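<#C01D21G7F0E> CoTeach: a platform for sharing lesson plan resources

<#C024NAMF0CV> CourseAPI: an open course information aggregation academy

<#C03GRV696RG> Lipoic is an education platform dedicated to integrating and improving remote teaching and online classrooms, so that students can learn without being limited by location and teachers can teach more conveniently. We are also passionate about the spirit of open culture. Join us in transforming education!

<#C06CQHHL0SW> Discussing the experiences of student self-government organizations across schools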

<#C06CQH8TNKD>

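<#C03D2FWM57X> Student club sponsorship and management project

<#C03EPT3A01E> Stress relief platform project

<#C03E2FCP46Q> Collaborative high school history notes

<#C03DTBP3HGW> Creative writing website: 廢青天地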

<#C03E2EZRB8C> 「職」凱瑞你 Carry your career

<#C03DX829G05>

<#C03E05DF935>

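<#C03DX7X45EH> School lunch leftover food project

<#C02RGBHUYKS> Platform integrating educational resources for high school students under the 108 curriculum guidelines

<#C03DC2G2JFM> Survival guide to the learning portfolio under the 108 curriculum guidelines

<#CUCCK9353> Helping students get better at managing their tasks

<#CL96QTF5G> 回音森林 (Echo Forest): a language pronunciation correction app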

<#C068EDJ40V9>

<#C027VH2GXNW> 零時小學校 camp activities channel

<#C01JVF9FUKB> 零時小學校 channel for instructors and lesson plan exchange

<#C01PLLJJP51>

<#C05475LAQ6B>

<#C04N42FJWHH> University course information exchange platform

<#C04Q5NG2XT9> Channel for NTU-related courses and activities

<#C05AV83UHM1>

<#C0G0478DC>

<#C03859QD7> Designers' channel

<#C5EEC5EEN>

<#C9V3KLLGH>

<#C06181VGWH2> Interactive website for learning Linux commands

<#C05BHSUT0TS>

<#C09ACNRUES1> Functional Programming community; we hold events every month!

<#C0923BV964F>

<#C055ZJBKZML>

<#C05PNGXC4KU>

<#C057R6D8UKE>

<#C06D320J537>

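<#C02L3PNNV> COSCUP (開源人年會)

<#C0A04TMPP2A> A creative coding community built by teenagers https://www.hackit.tw/zh-TW

<#C0241463T47> Technical exchange channel for OBS, the open-source cross-platform streaming and recording software

<#C09FAN97H> g0v band (零時樂團) :musical_score:

<#C09QQEHGYJU> A harmony analysis app combining artificial intelligence (AI) and music theory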

<#CF5CMMSDS>

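<#C022NUX0Z8D> Information for life while staying at home

<#C022299HZPC> Health issues, health checkups

<#C0999FDUDMW> Making drug package inserts easier to read

<#C0136MDHEMB> Unofficial development project for an NHI card reader component

<#C4M4S24NS> Mental health resources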

<#C06LFU2NDD0>

<#C01LWGSJLDT>

<#C096TGRJTHP>

<#C87MZ9SUR> Get moving (exercise)

<#C2Z5JG9G8> for hiking affair

<#C04AXQQQDEF> :camping: Data and online map covering the 205 legal campsites across Taiwan

<#CQASS2PL7> Exchange on NGO organizational affairs

*Open Gov & Projects*

<#C082GPK1Y1F> 數位民主研究案工作小組 g0v Digital Democracy Working Group

<#CUM4P4895> 關注台灣「開放政府行動方案」制定 Focus on Taiwan's "Open Government Action Plan"

<#C05V224CZKL> Public Money Public Code

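<#CH79PR9FY> #金流百科 money-flow encyclopedia (political donations, referendum fundraising, government tenders, visualization)

<#C067VNP6P88> Election-related projects or ideas

<#C040Y313L6P> Collecting election billboards

<#C048CBS8TUY> Platform compiling candidates' policy platforms

選前大補帖 (pre-election guide); the project has its own Slack: https://join.slack.com/t/taiwanvotingguide/shared_invite/zt-20t7r7oo3-KmgRaARq_1qHo1VECY8ssQ

<#C0525UB7JCE> Updated gradually with each year's elections; user feedback and feature wishes are welcome!

<#C0AAKG273NH> 憲庭加好友 / Constitutional Court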

<#CDRE0Q0CE> g0v 國會松、立法院資料 g0v Congress Hackathon, Legislative Yuan data

<#C0386PRQU> Parliament, Legislative Yuan

<#C03B427TG91>

<#C06HF2GMQSC>

<#C25J1PTFB> Collaborative law amendment tool

<#C0QHLAGH5> 立委咖電喂 (call your legislator)

<#CGRJJK0M9>

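<#C08F84BQMME> Help requested with compiling petition rolls for legislator recalls

<#C04FLCD6YFP> Anyone interested in raising the age threshold in the Criminal Code to twenty is welcome to join!

<#C2Q1M4N1J> Discussion of laws, regulations, and issues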

<#C05MGLXK0AH>

<#C6HN8HRGD>

<#C049V0A9M35>

<#C017GBM9Z2S> Chip-based national ID card

<#C04P2TY1QSZ> 關注個資隱私議題的頻道 Channel focusing on personal data privacy issues

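<#C01GX97MTT8> Want to discuss Taiwan's newly established Ministry of Digital Affairs?

<#C012S9ABTL7> Presidential Hackathon

<#CSFDD01AN> US Taiwan Watch (美國台灣觀測站)

<#C081STFCWM7> Guide to Northern California members of Congress

<#C2PNGP8N8> 國家寶藏 (National Treasure): calling on volunteers to dig into national archives and libraries around the world, unearth historical materials related to Taiwan, and build an open online database free for everyone to use. This project aims to bring these documents within the sight of the public, so that everyone can forge their own stories that were never told before.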

<#C02C6DA2L8Z>

<#CS16Q642K> 東亞女性權力行動歷程書寫 Documentation of East Asian women's empowerment movements

<#C02GD9FD1H8> Basic data on international think tanks (CSV)

<#C040NMX9UMS> Multilingual image cards

<#CV42198BE> Wikidata Taiwan discussion channel

<#C0CBAUFV3> Data

<#C06J59AE9GD>

<#C086LQXUE69> Online Defence Against the Dark Arts (網路黑魔法防禦術): a channel for discussing how to fight scams. The name comes from the Hogwarts school subject in the Harry Potter novels.

<#CNYM62P6X> Collecting and compiling sources of false information

<#C2PPMRQGP> 真的假的! Rumor-checking LINE bot :speech_balloon: Cofacts is a collaborative system connecting instant messages and fact-check reports together. It's a grass-roots effort fighting mis/disinformation in Taiwan.

<#C08KQ2HLJA2>

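<#C02JTFZRNEP> Sustainable income lab; sustainable giving issues

<#C2YQT8L4A> Social welfare issues and data

<#C05DXC8CNGP> Open corporate sustainability database - ESG checker

<#C06MKUR9N2F> Our goal is to open up funding sources for advocacy NGOs by collecting information on corporate donations and potential areas of cooperation into a database, giving organizations more opportunities to work with companies.

<#CQS6318KU> 透明足跡 (Transparent Footprint) - with open, transparent information, pollution has nowhere to hide

<#C4Z9BGHPZ> Discussing labor-related civic issues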

<#C069J08CXRS>

<#CUTQ3NF4K> PTT must not die; new version in testing

<#CUTEBCBUH>

<#C03RAK46BEC> 零時道 :cat: Supercharge g0v & the future of civic innovation w/ DAO, web3, etc. https://da0.g0v.tw/

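<#C046T7HT05U> Studi0 is running a funding experiment with Hypercerts

<#C046ZFGTWQK> An evolution of incentivizing g0v: building an organic contribution system

<#C046EJH4P2B> Producing a podcast on public goods and DAOs

<#C049L7M5X9S> Holds an ongoing monthly reading group

<#C047A5734FK> Building a web3 task reward system

<#C04FRRV17GF> Laying the infrastructure for da0

<#C051REPUTSS> Early-stage conversations on AI with Dark Matter Labs

<#C04UZ3JNH9A> Taking part in the global decentralized science wave within the gov autonomous organization; introducing and exploring the possibilities of decentralized science

<#C0525SELHFY> Collaboration with Ethereum's PSE, covering zero-knowledge proofs

<#C051U8RSTHV> Discussing holding a hackathon in Austria at the 2023 Ars Electronica Festival in Linz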

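<#C05BDAUDGP8> A small project focused on how digital technology can be applied to place-making. The name is the Japanese word for "地方" (locality), as in "地方創生" (ちほうそうせい, chihō sōsei), a term that is also familiar in Taiwan.

<#C0483Q7ALN6> Software localization and translation glossary project

<#C59M1NZV2> middle2 is an open-source PaaS platform

<#C04GALK46SC> Promoting self-hosted servers

<#C012MPC6GQ4> AWS users' exchange channel

<#C091S7KH8> Development of the g0v air pollution monitoring network (零時空污觀測網)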

<#C055GLJS94G> 開源 DIY 空氣淨化器 for anyone who is interested in helping make Clean Air available everywhere using open source DIY air purifiers

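<#CTMK5QPA8> Mask map & pandemic-related topics

<#C020EQ0R8TW> Vaccine-related topics

<#C026J0M3EBE> Website directing users to vaccine appointment booking

<#C02JTH1SYUT> Guidance for after pandemic restrictions are lifted

<#C056EHM42B1> Civil defense

<#C0955CHRVM1> Database of united front work targeting Taiwan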

<#C082GMDH39S>

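<#C01JWUGCS5C> Taiwan air defense identification zone (ADIZ) project

<#C0691H2GSB0> Civil defense afternoon tea

<#C047WUCMWEA> Exploring the use of the ATAK-CIV mobile app in disaster prevention, civil defense, and outdoor mission scenarios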

<#C0711704PHA> Meshtastic Taiwan Community 臺灣鏈網

<#C069DF8GNMR> Improving Taiwan's disaster preparedness through task-based action

<#C095JBP0NRE>

<#CD9EMS0F3> Geographic information and geodata :earth_asia: Geo-data

<#C0AEYRT8RU3>

<#C07D1NCHBB9> Energy issues channel

<#CJTBP7YRK> Rental housing data and issues

<#C04PE6MAKQE> Geographic Referencing for Technology Transfer via Bioregional similarity. Aggregating and associative mapping data.

<#C04FQLYGJE6> Crowdsourced annotation platform for sidewalk walking conditions

<#C0AMEQWMRM1>

<#C02BVH9569J> Formosan black bear sighting report platform :bear:

<#C02HY3VU9J4> Helping find lost cats :cat:

<#C18TYPFJ8>

<#CC789GL15> LostSAR: open-source search and rescue application

<#CN6CT9QG6> Cityscape issue reporting tool / LINE bot

<#CNA60GZJM> Reporting illegal factories; uses GeoDjango for geospatial queries

<#CS13KTC0N>

<#C029FJKJT19> Industrial landscape of the sugar railways

<#C2YH0QTKJ> Urban planning / database of urban planning commission meeting minutes

<#CPRDWQ8N4> 公有土地資料與地圖 Public Land Data

<#C05LTMJRJSJ>

<#C06CSUZS23F>

<#C0227C7CSNQ>

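<#C09FFUQT1DF> Discussing marine debris

<#C06GFQ8QQ2E> Rivers and watersheds

<#C03A0P6GL> 超農域, pesticide lookup system, 南庄桐花松 (Nanzhuang tung blossom hackathon), agricultural data and projects :ear_of_rice: Agriculture related projects

<#CQSH4F276> Counting trees by remote sensing, finding land with map data, advocating tree planting :deciduous_tree: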

<#C0ALS9R0L07>

<#C026NAFMF89>

<#C01DEU4RD3N>

<#C01DYJR28EL> Gluten-free safety net

<#CUCCDM12R> 食食課課: connecting life and culture through food

<#C62GMAJ2F> Promoting food sharing

<#C053LPLRG2D> Coffee chat https://naturechats.com/

<#C06QQ5EDQ> about art

<#CU8ULS2UW> Let's build a mascot database together~ More than 1,300 characters collected so far! :sun_with_face:

<#CU0HARXS7> Structuring predictions and projections :chart_with_upwards_trend: future predictions

*Slack channel archive URL (thanks, Ronny!)*

https://g0v-slack-archive.g0v.ronny.tw

*Facebook Group*

.零時小學校 Facebook Group: https://www.facebook.com/groups/240879797438433

.資料申請小幫手 (data request helper) Facebook Group: https://www.facebook.com/groups/2819127468115692/

.【Cofacts 真的假的】editors' exchange Facebook Group: https://www.facebook.com/groups/1847232902175197

.Open political donations discussion: https://www.facebook.com/groups/271528425531357

.g0v Bay Area community: https://www.facebook.com/groups/824770435679041/

*Discord*

.【Cofacts 真的假的】Discord: https://discord.gg/mmZS9sZuau

.確診者足跡地圖 (map of confirmed cases' footprints) Discord: https://discord.gg/ePKuRGE9sF

.零時小學校 Discord: https://discord.gg/csDjWBbhvf

.島島阿學 Discord: https://discord.com/invite/2NbQ7cu6jH

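.UniCourse university course information exchange platform Discord: https://discord.gg/VtFzwAdrXF

.Lipoic remote teaching and online classroom platform Discord: https://discord.gg/ArKk54ajfr

.翻轉歷史! Learn history with a Minecraft RPG! Discord: https://discord.gg/e6vhTq43gs

.綠洲計畫 - platform for sharing information and experience on special-talent admissions Discord: https://linktr.ee/lzgh2023

.自學力 製學例 website https://www.ability-of-self-studying.tw/

.開源星手村 board game design and promotion Discord: https://discord.gg/SFY2JwdBr9

.Grapycal graphical programming language Discord: https://discord.com/invite/adNQcS42CT

.中學資訊討論群 CISC (secondary school computing discussion group) Discord: https://discord.gg/cisc

.Hack It, organizing hackathons for high school students https://discord.gg/u4z8EFR2gr

.北臺灣學生資訊社群 (Northern Taiwan student computing community) Discord: https://discord.scint.org/

.中部高中電資社團聯合會議 (joint council of central Taiwan high school EE/CS clubs) Discord: https://discord.com/invite/At7r54v94c

.南臺灣學生資訊社群 (Southern Taiwan student computing community) Discord: https://discord.gg/6QW6gqhHQe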

.伴伴學 Discord: https://discord.gg/azQUs8Y2fY

.Code for Taiwan Discord: https://discord.com/invite/pRFjDXeFyv

.Formosa Art Bank 福爾摩沙藝術銀行 decentralized governance organization Discord: https://discord.com/invite/skvQAGPBDV

.GreenSofa 綠沙發 _ funding the development of Taiwan's public goods with Web3 Discord: https://discord.com/invite/skvQAGPBDV

.Twinkle AI Discord: https://discord.gg/Cx737yw4ed

.Code for Korea Discord https://discord.gg/xNNvfhJUV5

- Forwarded from #joinchannel-slack-頻道名稱彙整

- 2026-05-07 02:22:10

王家緯(Kevin Wang)

22:20:12

@dan.wang8250 has joined the channel

2026-05-24

雪泥

00:34:03

It's going to be hot tomorrow

雪泥

00:34:48

Remember to drink plenty of water 6ω6

hongkong.outlanders

07:22:45

@hongkong.outlanders has joined the channel

雪泥

08:24:47

g0v Summit 2026 shuttle bus: now in service

- ❤️1

- 🚌1

雪泥

08:27:18

Please wait at the bus stop on the *市民大道 (Civic Boulevard)* side of *MRT Nangang Station / TRA Nangang Station / HSR Nangang Station*

雪泥

08:27:44

Bus stop name: *南港車站* (Nangang Station)

pipperl

08:30:46

Hello, what time does the last return shuttle leave this afternoon? (I saw some people miss it yesterday…)

雪泥

08:33:22

I'm not sure

雪泥

08:33:29

Let me ask for you

david lin

08:35:11

@aitpmc103 has joined the channel

雪泥

09:12:08

Around 18:15

wang wade

09:24:02

@weide220 has joined the channel

pei

09:44:54

@pei has joined the channel

雪泥

09:51:28

g0v Summit 2026 shuttle bus: *last bus*

雪泥

09:51:32

Departs at 10:10 sharp

雪泥

09:53:08

Please get to the bus stop early

雪泥

10:02:59

g0v Summit 2026 shuttle bus: *last bus*

雪泥

10:03:04

Has arrived

雪泥

10:03:28

Passengers who have not yet boarded, please board as soon as possible

雪泥

10:10:42

g0v Summit 2026 shuttle bus: *last bus*

雪泥

10:10:48

Has departed

雪泥

10:11:04

Passengers who did not board, please make your own way to the venue

chiuyas

10:51:42

@chiuyas has joined the channel

issacbyming

11:12:26

Is something wrong with the livestream?

s8012531

14:04:48

@s8012531 has joined the channel

chewei 哲瑋

16:32:54

[Event] ∆ 5/31 g0v 動手自造 (build-it-ourselves) hackathon, sign-ups welcome ~ g0v Braiding our Future Hackath73n ✓ The event name comes from the g0v Summit website: "No waiting, no depending; we turn from guests into hosts and build it with our own hands"

g0v Hackath73n, Sunday 5/31 @ g0v Taipei community space, 2F, No. 2, Sec. 3, Chongqing S. Rd.

https://g0v-jothon.kktix.cc/events/g0v-hackath73n

https://g0v.hackmd.io/@jothon/g0v-hackath73n/

chewei 哲瑋

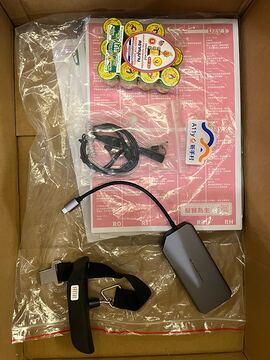

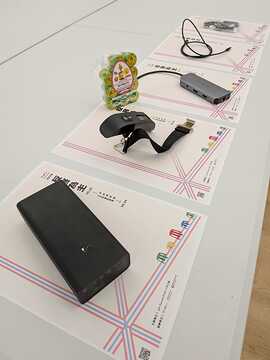

20:36:45

Summit 2026 lost and found: owners, please contact <#C0385B90D> 揪松團 (jothon). The items are currently at No. 2, Sec. 3, Chongqing S. Rd., Zhongzheng Dist., Taipei. You could also join the g0v hackathon on Sunday 5/31 and pick them up then.

• Power bank

• Adapter

• Type C cable

• Electronic scale

• Headset cable

• Thai 虎猴牌 herbal nasal balm

Photos of the items

https://photos.app.goo.gl/MJeHDnjcPxbG1NUH7

- 2

- 👍1

RS

22:00:02

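@chewei The tiger balm was a gift from Thai friends to the Summit staff. Please put it away with the Summit things for now; we'll hand it out at the retrospective meeting~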

2026-05-25

RS

00:07:12

The Summit videos will be released once editing is done! ᶘ ᵒᴥᵒᶅ

RS

00:14:44

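Thank you all for taking part in this year's g0v Summit! We hope everyone recharged over these two days and ran into interesting people and things!

If you have a moment, please fill out our post-event survey: https://forms.gle/civkS5TfNSFDaNaZ6

See you at the hackathon ( ´▽` )ノ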

- 6

3

3

ballfish

09:35:27

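There is one more lost item, possibly a staff member's or speaker's 茄子袋 bag (they all look the same, so no photo)

I'll bring it along too, but the clothes inside and the bag will probably just be reclaimed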

Eli

21:30:03

The power bank and the Type-C cable are mine 😢 I can pick them up at the hackathon. Thanks

huang

22:32:03

@wanjei has joined the channel

Win

22:33:12

@han20011222 has joined the channel

chewei 哲瑋

23:46:41

Replied to a thread: 2026-05-24 16:32:54

g0v-jothon.kktix.cc

Join us for the g0v hackathon on Sunday 5/31! :milky_way: Hacking +:zap: Learning!

- 1

1

1

2026-05-26

0x13equity

08:35:26

@0x13equity has joined the channel

oscar52397

10:30:57

@oscar52397 has joined the channel

Seasu Wang

13:33:42

@seasuwang has joined the channel

김영환

14:03:13

@kimyounghwan06271990 has joined the channel

525hahahaha

19:19:23

@525hahahaha has joined the channel

2026-05-27

ab2001119

02:03:52

@ab2001119 has joined the channel

ky

17:07:31

Replied to a thread: 2026-05-24 16:32:54

Also, the ticket shows

2026/05/01 00:00(+0800) ~ 2026/05/31 12:00(+0800)

I don't seem to have permission to change it

chewei 哲瑋

20:44:33

The range above is the period during which registration is open

李昀臻

21:07:28

@b13401102 has joined the channel